The artificial intelligence landscape is rapidly evolving. A new class of database technology has emerged for advanced AI applications: Vector Databases. These specialized systems are designed to store, manage, and query high-dimensional data vectors.

These vectors are numerical representations of complex objects such as images, text, audio, and user preferences. Understanding Vector Databases is crucial for anyone building next-generation AI systems.

Traditional relational databases excel at structured data and exact matches. However, they struggle immensely with nuanced, contextual AI queries. This is where vector systems step in. They enable "similarity search."

This allows systems to find data points that are conceptually similar to a query, not just results that match it exactly. This capability unlocks new ways of interacting with information, moving beyond simple keywords toward meaning.

What Are Vector Databases and Why Are They Essential?

At its core, a Vector Database is optimized for storing and querying vector embeddings. These embeddings are dense numerical representations generated by machine learning models. The individual numbers are usually not interpretable on their own; taken together, they encode features and attributes of the data.

For instance, a text string like "golden retriever" is transformed into a vector whose position in the embedding space reflects concepts such as "dog," "furry," and "friendly."

These databases are essential because they bridge the gap between human understanding and machine processing. When you ask an AI system a question, you often want a contextually relevant answer rather than an exact keyword match.

Such databases facilitate this by comparing your query's vector to stored data vectors. They then find the closest matches based on mathematical distance. This capability is indispensable for a wide range of AI applications.

Imagine searching for images that look "similar" to a given picture, even if they don't share the same tags, or finding documents that convey a similar sentiment regardless of the specific words used. Vector databases make such queries possible and efficient at scale.

How Vector Databases Work: Embeddings and Similarity Search

The operational foundation of Vector Databases rests on two primary concepts. These are vector embeddings and similarity search. These concepts work in tandem. They transform raw data into a queryable, semantic format.

Vector Embeddings: The Language of AI

Vector embeddings are numerical lists. They are often hundreds or thousands of dimensions long. Machine learning models create them. These models, like word2vec, BERT, or specialized image encoders, map complex real-world entities into a multi-dimensional space. In this space, items with similar meanings or characteristics are placed closer together.

For example, in a well-trained embedding space, the vector for "king" would be mathematically close to the vector for "queen." The offset between "king" and "man" might also be similar to the offset between "queen" and "woman." This vector arithmetic allows AI systems to capture relationships and context, which goes far beyond simple keyword matching. Creating high-quality embeddings is a critical first step for leveraging any vector database.
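The analogy can be made concrete with a toy sketch. The three-dimensional vectors below are hand-picked for illustration and are not the output of any real model; the `analogy` helper is likewise a hypothetical name. Real embeddings have far more dimensions, and their axes carry no such tidy labels.

```python
import math

# Hand-picked toy vectors (NOT real model output).
# The axes loosely stand for: [royalty, maleness, femaleness].
toy_embeddings = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def analogy(a, b, c, embeddings):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(embeddings[a], embeddings[b], embeddings[c])]
    candidates = (word for word in embeddings if word not in (a, b, c))
    return max(candidates, key=lambda word: cosine_similarity(target, embeddings[word]))


# "king" - "man" + "woman" lands nearest to "queen" in this toy space.
result = analogy("king", "man", "woman", toy_embeddings)
print(result)
```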

Similarity Search: Finding Meaningful Connections

Once data has been transformed into vector embeddings and stored, these systems perform similarity searches. The process takes a query vector and finds the most similar vectors in the database. Similarity is typically measured using Euclidean distance, cosine similarity, or dot product.
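As a minimal sketch, the three measures can be written in a few lines of plain Python. The function names and sample vectors are illustrative only; production systems use optimized numeric libraries rather than list comprehensions.

```python
import math


def dot_product(a, b):
    """Sum of element-wise products; larger means more similar."""
    return sum(x * y for x, y in zip(a, b))


def euclidean_distance(a, b):
    """Straight-line distance; smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine_similarity(a, b):
    """Cosine of the angle between a and b; ignores vector length."""
    return dot_product(a, b) / (math.sqrt(dot_product(a, a)) * math.sqrt(dot_product(b, b)))


query = [1.0, 0.0]
same_direction = [2.0, 0.0]   # points the same way, but twice as long
orthogonal = [0.0, 1.0]       # unrelated direction

for name, vec in [("same_direction", same_direction), ("orthogonal", orthogonal)]:
    print(name,
          euclidean_distance(query, vec),
          cosine_similarity(query, vec),
          dot_product(query, vec))
```

Cosine similarity treats `same_direction` as a perfect match because it ignores magnitude, while Euclidean distance still reports a gap of 1.0. When all vectors are normalized to unit length, the three measures produce the same ranking.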

However, comparing a query vector against every stored vector (brute-force search) becomes computationally expensive, and often impractical, on large datasets. To overcome this, vector databases employ Approximate Nearest Neighbor (ANN) algorithms, which sacrifice a small amount of accuracy for significant gains in speed and scalability.
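For reference, this is roughly what the brute-force baseline looks like. It is a sketch under simple assumptions: the `stored` dictionary and the `brute_force_search` name are made up for the example, and cosine similarity is used as the measure.

```python
import heapq
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def brute_force_search(query, vectors, k=2):
    """Exact nearest-neighbor search: score every stored vector, keep the top k."""
    scored = ((cosine_similarity(query, vec), key) for key, vec in vectors.items())
    return [key for _, key in heapq.nlargest(k, scored)]


# Hypothetical stored vectors keyed by document id.
stored = {
    "doc_a": [1.0, 0.0, 0.0],
    "doc_b": [0.9, 0.1, 0.0],
    "doc_c": [0.0, 1.0, 0.0],
    "doc_d": [0.0, 0.0, 1.0],
}

print(brute_force_search([1.0, 0.05, 0.0], stored, k=2))
```

Every query touches every stored vector, so the cost grows linearly with both the number of vectors and their dimensionality. That linear scan is what ANN indexes are designed to avoid.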

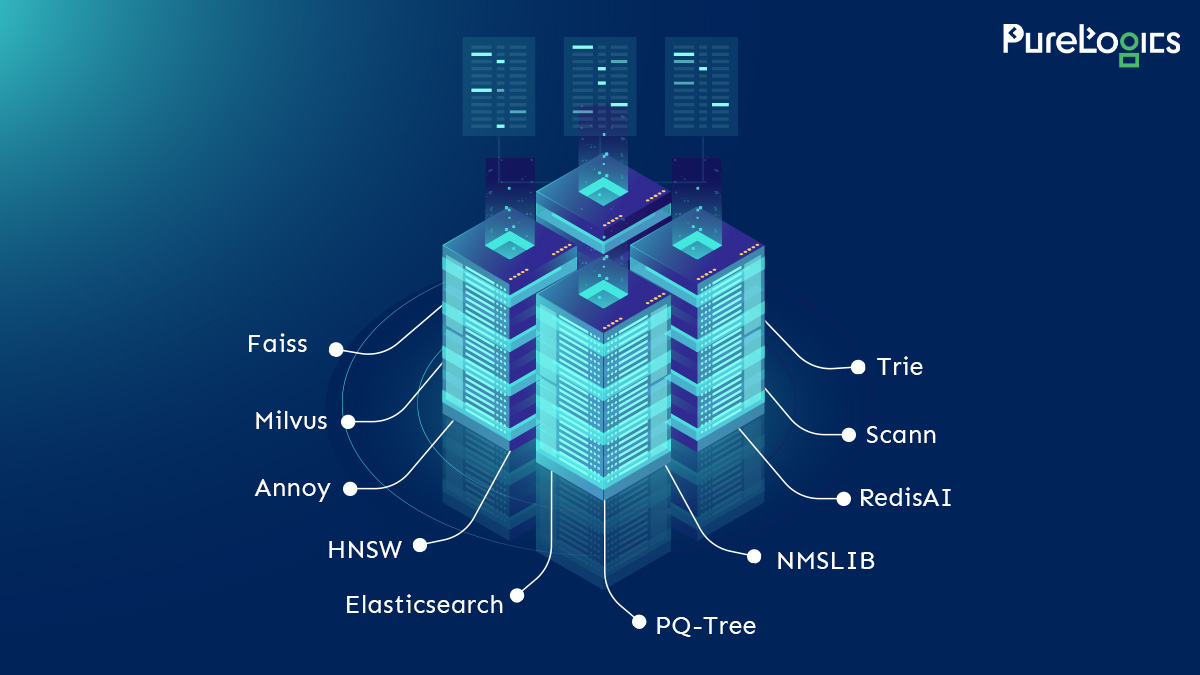

Popular ANN techniques include Hierarchical Navigable Small World (HNSW) graphs, Locality-Sensitive Hashing (LSH), and Product Quantization (PQ). These algorithms organize or compress vectors so that the database can quickly narrow down the search space. This efficiency is paramount for real-time AI applications, which demand rapid responses to complex queries.
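To give a feel for how an ANN index narrows the search space, here is a simplified sketch of one LSH variant, random-hyperplane hashing. The class name, parameters, and sample data are assumptions made for illustration. HNSW and PQ work quite differently, and real LSH implementations typically use several hash tables and re-rank candidates with an exact distance.

```python
import random
from collections import defaultdict


class RandomHyperplaneLSH:
    """Toy LSH index: vectors on the same side of every random hyperplane share a bucket."""

    def __init__(self, dim, num_planes=8, seed=0):
        rng = random.Random(seed)  # fixed seed keeps the example reproducible
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_planes)]
        self.buckets = defaultdict(list)

    def signature(self, vector):
        # One bit per hyperplane: which side of the plane the vector falls on.
        return tuple(
            1 if sum(p * v for p, v in zip(plane, vector)) >= 0 else 0
            for plane in self.planes
        )

    def add(self, key, vector):
        self.buckets[self.signature(vector)].append(key)

    def candidates(self, query):
        # Only the query's own bucket is inspected, not the whole dataset.
        return list(self.buckets.get(self.signature(query), []))


index = RandomHyperplaneLSH(dim=3)
index.add("a", [1.0, 2.0, 3.0])
index.add("a_scaled", [2.0, 4.0, 6.0])        # same direction as "a"
index.add("a_opposite", [-1.0, -2.0, -3.0])   # opposite direction

print(index.candidates([0.5, 1.0, 1.5]))
```

Vectors pointing in the same direction always share a signature, so they are returned as candidates, while the opposite vector falls into a different bucket and is never compared. The approximation comes from the fact that two nearby vectors can occasionally be split by a hyperplane and missed.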

Key Components and Features of Modern Vector Databases

Modern Vector Databases offer a robust set of features. They go beyond basic storage and similarity search. They are engineered for performance, scalability, and ease of use. This is crucial in production AI environments.

- Indexing Structures: Optimized data structures (like graphs, trees, or hash tables) for fast similarity search using ANN algorithms.

- Scalability: Designed to handle billions of vectors and high query throughput, often distributed across multiple nodes.

- Filtering Capabilities: Combine vector similarity search with traditional metadata filtering (e.g., "find similar images of cats created after 2022").

- Data Management: Features for inserting, updating, deleting, and managing vector data, often with transactional support.

- High Availability and Durability: Mechanisms to ensure data persistence and continuous operation, even in the event of failures.

- API and SDK Support: Developer-friendly interfaces for integrating with various programming languages and AI frameworks.

These features collectively make Vector Databases powerful tools for AI developers. They abstract away the complexity of managing high-dimensional data, so developers can focus on building intelligent applications. Leading vector database solutions also continue to add more advanced features.
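The filtering capability listed above can be sketched as a metadata pre-filter followed by similarity ranking. The `Record` type, the `filtered_search` function, and the sample data are hypothetical; real databases differ in whether they filter before, during, or after the vector search.

```python
import math
from dataclasses import dataclass


@dataclass
class Record:
    id: str
    vector: list
    metadata: dict


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def filtered_search(query, records, predicate, k=3):
    """Keep records whose metadata passes the predicate, then rank by similarity."""
    survivors = [r for r in records if predicate(r.metadata)]
    survivors.sort(key=lambda r: cosine_similarity(query, r.vector), reverse=True)
    return [r.id for r in survivors[:k]]


# Hypothetical image embeddings with a creation year attached.
records = [
    Record("cat_2021", [0.9, 0.1], {"year": 2021}),
    Record("cat_2023", [0.8, 0.2], {"year": 2023}),
    Record("cat_2024", [0.6, 0.4], {"year": 2024}),
    Record("dog_2023", [0.1, 0.9], {"year": 2023}),
]

cat_query = [1.0, 0.0]  # stands in for the embedding of a cat picture
print(filtered_search(cat_query, records, lambda m: m["year"] > 2022, k=2))
```

Note that `cat_2021` is the closest vector to the query but is excluded by the year filter before ranking takes place.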

Common Use Cases for Vector Databases

The applications for Vector Databases are vast. They continue to grow as AI adoption expands. Here are some of the most impactful use cases:

- Semantic Search: Go beyond keyword matching. Users can search for "red sports car" and get results for a "scarlet convertible." This is because the vectors are semantically close.

- Recommendation Systems: Recommend products, movies, or content based on user preferences and past interactions. If a user likes a certain movie, similar movies (those nearby in vector space) can be suggested.

- Generative AI and RAG (Retrieval Augmented Generation): Enhance large language models (LLMs) by providing external, up-to-date, and domain-specific knowledge. Relevant information is retrieved from a vector database and supplied to the LLM before it generates a response, which can reduce hallucinations. For more on RAG, check out this overview of Retrieval Augmented Generation.

- Anomaly Detection: Identify unusual patterns or outliers in data. Vectors far from the majority of other vectors might indicate fraudulent activity or system failures.

- Image and Video Search: Find visually similar images or video segments without relying on manual tagging.

- Duplicate Detection: Identify duplicate or near-duplicate items across large datasets, which is useful for content moderation or data cleaning.

Each of these applications leverages the ability of these systems to compare data by meaning rather than by exact value. This transforms how businesses build and deploy intelligent systems.
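As one illustration, the retrieval step of the RAG use case above might look like the following sketch. Everything here is an assumption made for the example: `embed` is a toy word-count function standing in for a real embedding model, the in-memory `store` stands in for a vector database, and the prompt template is arbitrary. No LLM is called.

```python
import math

VOCABULARY = ["vector", "database", "index", "refund", "policy", "days"]


def embed(text):
    """Toy stand-in for an embedding model: word counts over VOCABULARY."""
    words = text.lower().replace(".", "").replace("?", "").split()
    return [float(words.count(term)) for term in VOCABULARY]


def cosine_similarity(a, b):
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return sum(x * y for x, y in zip(a, b)) / norm if norm else 0.0


documents = [
    "A vector database builds an index over embeddings.",
    "Our refund policy allows returns within 30 days.",
]
# In a real system these pairs would live in the vector database.
store = [(doc, embed(doc)) for doc in documents]


def retrieve(question, k=1):
    """Return the k stored documents most similar to the question."""
    q = embed(question)
    ranked = sorted(store, key=lambda item: cosine_similarity(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def build_prompt(question, k=1):
    """Place the retrieved context ahead of the question for the LLM."""
    context = "\n".join(retrieve(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


prompt = build_prompt("What is the refund policy?")
print(prompt)
```

The resulting prompt would then be sent to the language model, which answers from the retrieved passage instead of relying only on what it learned during training.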

Comparing Vector Databases to Traditional Databases

It is important to understand that Vector Databases are not replacements for traditional databases. Instead, they complement them. They handle a specific type of data and query. Here's a quick comparison:

| Feature | Traditional Relational Database (e.g., PostgreSQL) | Vector Databases (e.g., Pinecone, Milvus) |
| --- | --- | --- |
| Primary Data Type | Structured, tabular data (rows/columns) | High-dimensional vectors (numerical arrays) |
| Primary Query Type | Exact matches, range queries, aggregations (SQL) | Similarity search (Approximate Nearest Neighbor) |
| Use Case Focus | Transactional processing, data analytics, reporting | Semantic search, recommendations, AI applications |
| Schema Flexibility | Strict, predefined schema | Flexible, schema-on-read for vector metadata |
| Scalability for AI | Limited for high-dimensional similarity | Designed for massive scale of vector data |

Many modern AI architectures combine both types of databases. A relational database might store user profiles and metadata. Meanwhile, a vector database stores the embeddings of their content and preferences. This hybrid approach offers the best of both worlds.
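A minimal sketch of this hybrid pattern is shown below, using Python's built-in `sqlite3` module for the relational side. The table layout, the sample rows, and the plain dictionary standing in for the vector database are all assumptions made for illustration.

```python
import math
import sqlite3

# Relational side: structured metadata in SQLite (in-memory for the demo).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE articles (id INTEGER PRIMARY KEY, title TEXT, author TEXT)")
conn.executemany(
    "INSERT INTO articles VALUES (?, ?, ?)",
    [(1, "Intro to HNSW", "Ada"), (2, "Baking Bread", "Ben"), (3, "ANN Benchmarks", "Cy")],
)

# Vector side: a plain dict stands in for the vector database (id -> embedding).
vector_index = {1: [0.9, 0.1], 2: [0.0, 1.0], 3: [0.8, 0.3]}


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def search_articles(query_vector, k=2):
    """Find the nearest ids by similarity, then fetch their rows from SQL."""
    top_ids = sorted(
        vector_index,
        key=lambda i: cosine_similarity(query_vector, vector_index[i]),
        reverse=True,
    )[:k]
    placeholders = ",".join("?" * len(top_ids))
    rows = conn.execute(
        f"SELECT id, title, author FROM articles WHERE id IN ({placeholders})",
        top_ids,
    ).fetchall()
    by_id = {row[0]: row for row in rows}
    # SQL does not preserve the similarity order, so restore it here.
    return [by_id[i][1:] for i in top_ids]


print(search_articles([1.0, 0.0]))
```

The shared primary key is what ties the two stores together: the vector side answers "which items are similar?" and the relational side answers "what do we know about those items?".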

Choosing the Right Vector Database for Your Project

Selecting an appropriate vector database involves considering several factors relevant to your specific application and infrastructure. Many robust options are available, including both open-source projects and managed services.

Key Considerations:

- Scalability Requirements: How many vectors do you anticipate storing? What is your expected query load?

- Performance Needs: What latency is acceptable for your similarity searches?

- Cost: Evaluate pricing models for managed services or the operational costs for self-hosted solutions.

- Deployment Model: Do you prefer a fully managed cloud service or an on-premise/self-hosted solution?

- Integration Ecosystem: How well does the database integrate with your existing AI frameworks, programming languages, and cloud providers?

- Features: Do you need advanced filtering, data lifecycle management, or specific ANN algorithms?

- Community and Support: For open-source options, a strong community is vital. For commercial offerings, support plans are important.

Popular choices include Pinecone, Milvus, Qdrant, Weaviate, and Vespa. Each has strengths in different areas. Careful evaluation based on your project's needs is essential. The landscape of these specialized databases is rapidly evolving. New innovations are continually emerging.
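For the scalability question in the list above, a back-of-the-envelope estimate of raw storage is a useful starting point. The sketch below assumes vectors stored as 32-bit floats (4 bytes per value); the figures of 10 million vectors and 768 dimensions are illustrative, not a recommendation.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Bytes needed for the raw vectors alone (float32 = 4 bytes per value)."""
    return num_vectors * dimensions * bytes_per_value


def to_gib(num_bytes):
    """Convert bytes to gibibytes."""
    return num_bytes / (1024 ** 3)


# Illustrative workload: 10 million vectors of 768 dimensions each.
estimate = raw_vector_bytes(10_000_000, 768)
print(f"{estimate:,} bytes = {to_gib(estimate):.1f} GiB")
```

This counts only the vectors themselves. Index structures, metadata, and replication add to the total, and compression techniques such as quantization can reduce it, so treat the result as a rough baseline rather than a sizing guarantee.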

The Future of Vector Databases in AI

The trajectory for Vector Databases is clearly upward. As AI models become more sophisticated and data volumes grow, the need for efficient, semantic information retrieval will only intensify. We can expect several trends to shape their future:

- Deeper Integration with LLMs: Further optimization for RAG architectures and integration with large language models will be paramount.

- Multimodal Capabilities: Enhanced support for combining and querying vectors from different modalities (text, image, audio) seamlessly.

- Advanced Indexing and Algorithms: Continued research into even faster and more memory-efficient ANN algorithms.

- Managed Services Growth: More robust and feature-rich managed vector database services will simplify deployment and maintenance for developers.

- Standardization: Efforts to standardize APIs and query languages may emerge, making it easier to switch between providers.

Vector Databases are no longer a niche technology. They are becoming foundational infrastructure for nearly every cutting-edge AI application. Their ability to infuse AI with a deeper understanding of context and meaning makes them indispensable.

In conclusion, Vector Databases represent a pivotal shift in how we manage and interact with data in the age of AI. By transforming raw information into rich, queryable embeddings, they let applications move beyond exact matching toward semantic retrieval. For any organization looking to leverage the full potential of machine learning, mastering the principles and applications of these systems is not just an advantage; it's a necessity.
